Presentation

I chose a presentation slot on Thursday so that I would get a chance to see the others present on Tuesday first. That turned out well: listening to their presentations and the feedback they got gave me a better understanding of what was expected. I found Soda's topic really interesting and could relate to what she said about focusing too much on technical aspects or outcomes.

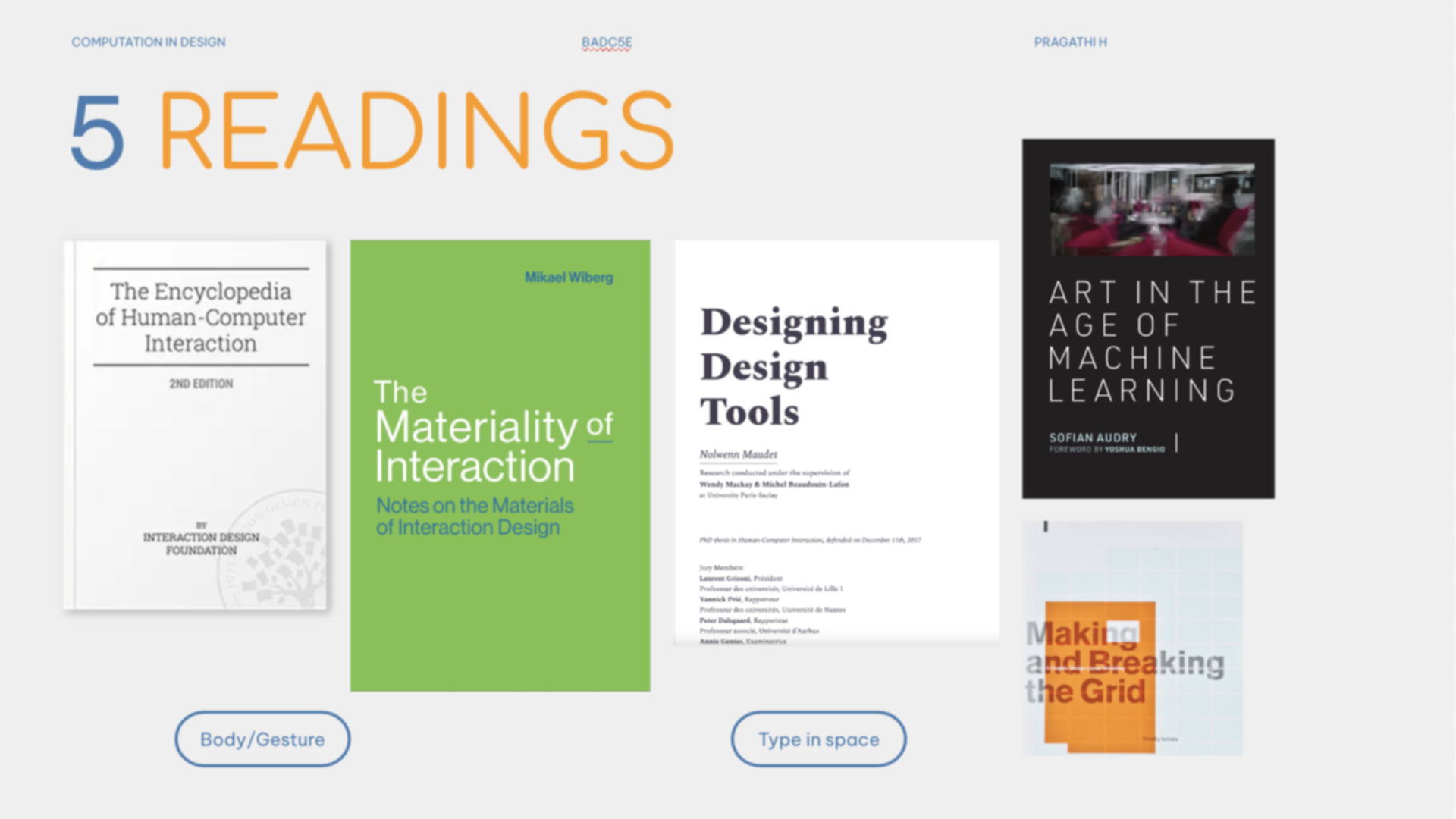

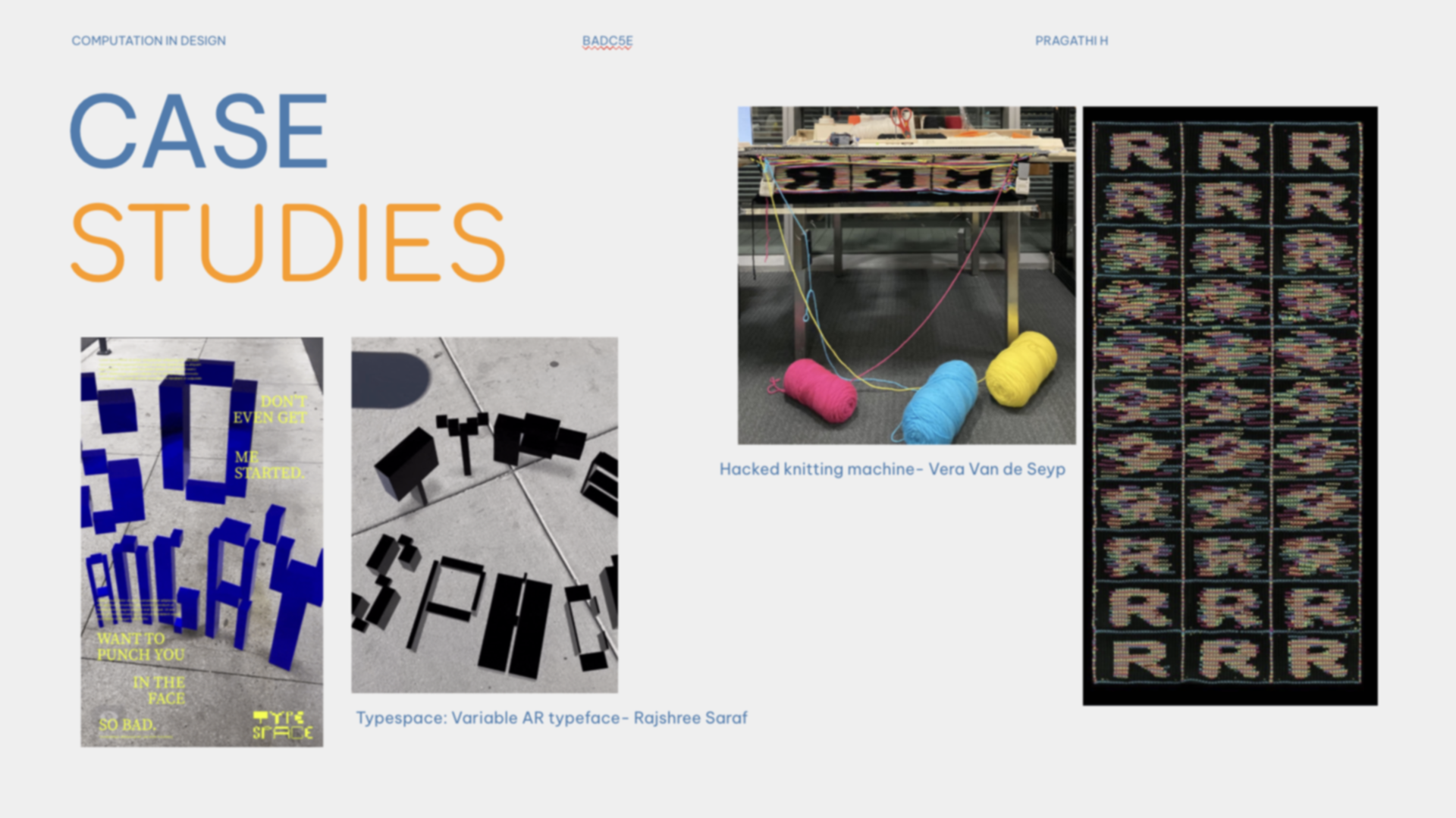

My presentation on Thursday went fairly well, I'd say; the images above show some of my slides. The feedback I got was mostly that my chosen topic was very broad: one could pick several different parts of it and develop each into a separate FYP. This meant I would need to get more specific about what kinds of gesture I want to explore. Am I thinking of typography in the sense of designing typefaces, or typography that's more explorative and fun? With respect to gestures, do I mean movement in dance or performance, or intuitive, uncontrolled gestures?

Experiments

For our practical work, we were asked to make something, anything at all, that would act as a starting point for discussion next week. I had wanted to experiment with the ml5.js library ever since I found out about it and played around with the SketchRNN model one day. I decided to jump right in and build something with the handPose model to test it out. I have to say the library really lives up to its tagline: friendly machine learning for the web. The documentation, tutorials and examples were easy to use and play around with.

I used the hand keypoints detection example under the handPose model. I was quite surprised to see that it could detect 21 key points on my hand very accurately. I was really curious to find out how exactly the model worked, so I asked my best coding companion: ChatGPT. I found out that it is built on TensorFlow, an open-source machine learning framework by Google. TensorFlow itself is not a model; the hand detector that runs on it is a deep learning model, a neural network trained on large datasets of images of hands to detect and locate the key points. I had learnt and read a lot about deep learning and big data in the data class I took at MICA, so seeing it in application was really fascinating. Something new I learnt was that apart from the x and y coordinates of the keypoints, the model also estimates a z coordinate, which relates to depth.
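To make the x, y and z values a bit more concrete, here is a small sketch of reading one keypoint out of a detection result. It assumes each detected hand is an object with a `keypoints` array (x, y, name) and a `keypoints3D` array (x, y, z, name), which is how I understand recent ml5.js releases to report hands; older versions may use a different shape. The sample numbers and the `getKeypoint` helper are made up for illustration.

```javascript
// Made-up sample data in the ASSUMED result shape.
// Only 3 of the 21 keypoints are shown to keep it short.
const sampleHands = [
  {
    keypoints: [
      { x: 320, y: 400, name: "wrist" },
      { x: 280, y: 330, name: "thumb_tip" },
      { x: 330, y: 210, name: "index_finger_tip" },
    ],
    keypoints3D: [
      { x: 0.0, y: 0.08, z: 0.0, name: "wrist" },
      { x: -0.03, y: 0.02, z: -0.02, name: "thumb_tip" },
      { x: 0.01, y: -0.09, z: -0.04, name: "index_finger_tip" },
    ],
  },
];

// Hypothetical helper: find a keypoint by name and attach its z value.
// Returns null if the hand has no keypoint with that name.
function getKeypoint(hand, name) {
  const flat = hand.keypoints.find((kp) => kp.name === name);
  if (!flat) return null;
  const deep = (hand.keypoints3D || []).find((kp) => kp.name === name);
  return { name, x: flat.x, y: flat.y, z: deep ? deep.z : null };
}
```

Calling `getKeypoint(sampleHands[0], "index_finger_tip")` gives back the 2D position on the canvas together with the z value from the 3D list.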

After understanding a little about the functions and capabilities of the model, I tried to build something simple with it. I had some difficulty setting up the library in my HTML file, probably because the version I had tried out before was older, but it was simply a matter of copying the right CDN link. Lately I've been intrigued by a lot of glitchy generative visuals like this ____. I wanted to try building something like it, so for the first iteration of this sketch I used the random function to generate multiple squares of different colours around the keypoints that were being detected. I did this using four loops nested inside each other: the first runs through the array of detected hands, the second through every keypoint of a hand, and the third and fourth assign x and y coordinates to the randomly coloured squares.
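A rough sketch of how the four nested loops could fit together is below. It is not the exact code from the sketch: the function name `buildSquares`, the grid size and cell size defaults, and the assumed shape of `hands` (an array of objects with a `keypoints` array of `{ x, y }` points) are all illustrative. The random source is passed in as a parameter so the function can be tried outside of p5.js.

```javascript
// Builds a list of squares to draw: one small grid of squares per keypoint.
// ASSUMPTION: `hands` looks like [{ keypoints: [{ x, y }, ...] }, ...].
// gridCount, cellSize and rand are hypothetical parameters for illustration.
function buildSquares(hands, gridCount = 3, cellSize = 10, rand = Math.random) {
  const squares = [];
  const offset = (gridCount * cellSize) / 2; // centres each grid on its keypoint

  for (let h = 0; h < hands.length; h++) {          // loop 1: every detected hand
    const keypoints = hands[h].keypoints;
    for (let k = 0; k < keypoints.length; k++) {    // loop 2: every keypoint
      const kp = keypoints[k];
      for (let i = 0; i < gridCount; i++) {         // loop 3: x position in the grid
        for (let j = 0; j < gridCount; j++) {       // loop 4: y position in the grid
          squares.push({
            x: kp.x - offset + i * cellSize,
            y: kp.y - offset + j * cellSize,
            size: cellSize,
            // a fresh random RGB colour for every square
            color: [
              Math.floor(rand() * 256),
              Math.floor(rand() * 256),
              Math.floor(rand() * 256),
            ],
          });
        }
      }
    }
  }
  return squares;
}

// Inside a p5.js draw() function this might be used roughly as:
//   for (const s of buildSquares(hands)) {
//     fill(s.color[0], s.color[1], s.color[2]);
//     square(s.x, s.y, s.size);
//   }
```

Because the colours are picked again on every call, running this once per frame produces the constantly flickering colours of the first iteration.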

I liked how the outcome turned out for a first try. I had fun testing it out, making different gestures to see how well the model would detect them. I observed that since the grid of squares around each keypoint was a fixed size, the grids were more spread apart when I moved my hands closer to the camera than when my hands were further away. The size of the grids also made my fingers look chonky and fat, which looked funny sometimes. I also realised that the image on screen was mirrored, and that the random colours kept updating every frame. These were things I wanted to address later, but for now, this was a good starting point.
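For later reference, here is one possible way the three observations could be handled. This is only a sketch of ideas, not something that has been built into the project yet, and every name in it (`mirrorX`, `makeColourCache`, `cellSizeFor`, the `ratio` parameter) is hypothetical. It assumes the same `{ keypoints: [{ x, y }] }` hand shape as before.

```javascript
// 1. Mirroring: flip an x coordinate across the canvas width.
function mirrorX(x, canvasWidth) {
  return canvasWidth - x;
}

// 2. Flickering colours: pick a random colour once per key and reuse it,
//    instead of picking a new colour on every frame.
function makeColourCache(rand = Math.random) {
  const cache = new Map();
  return function getColour(key) {
    if (!cache.has(key)) {
      cache.set(key, [
        Math.floor(rand() * 256),
        Math.floor(rand() * 256),
        Math.floor(rand() * 256),
      ]);
    }
    return cache.get(key);
  };
}

// 3. Fixed grid size: scale the cell size with how wide the hand appears
//    on screen, so a closer (larger) hand gets larger squares.
//    `ratio` is an arbitrary tuning value.
function cellSizeFor(hand, ratio = 0.05) {
  const xs = hand.keypoints.map((kp) => kp.x);
  const span = Math.max(...xs) - Math.min(...xs);
  return span * ratio;
}
```

A key such as `"hand0-kp4-cell2-1"` passed to `getColour` would keep each square's colour stable between frames, while `mirrorX(kp.x, width)` would flip the keypoints to match a mirrored video.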