Digging deeper

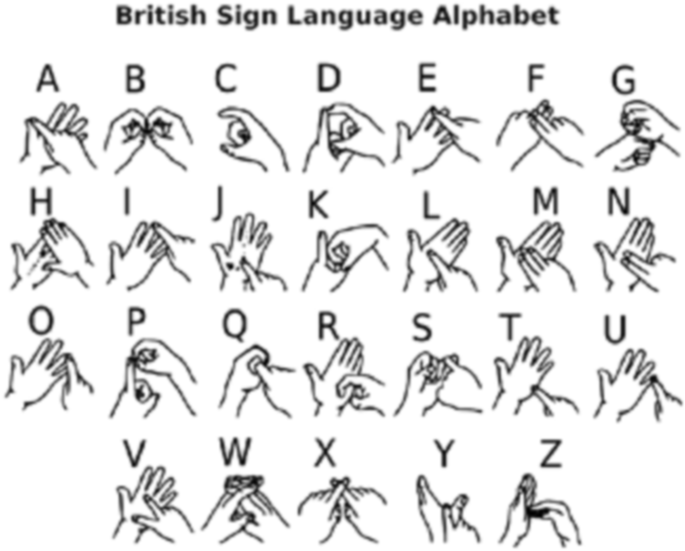

My week began with trying to dig deeper and find readings related to gesture and interaction. A lot of the readings I found on hand gestures were about HGR (hand gesture recognition) and were very technical in nature. Most of them, like this one, were written by students or professors with engineering backgrounds and focused on building a successful hand recognition or hand keypoint recognition model. While these readings were insightful for learning how models like MediaPipe or ml5 were built, I wasn't sure how much they would influence my thinking or writing. I was looking for theories that would support my proposal of using gestures as a mode of interaction between human and machine. Andreas said I could look into papers or articles about new media projects that use hand gestures; maybe that's the way forward.

Echoes for Tomorrow

I started by looking at what technology and gadgets were available to work with. When Andreas briefed me, he mentioned a camera, two screens and a few sensors I could use. I was leaning towards using some sort of hand gesture as input, since that was what I was currently reading about and I wanted to start the practical work for my catalogue of making. My initial ideas revolved around humanising the machine by making it react to the presence of a human or the waving of hands. I was inspired by how pets react to their owners coming home and wanted to create a similar interaction with a machine, to show a "soft" side of it rather than the usual cold, always-perfect, never-makes-mistakes side.

The next step was to work through some technical hurdles: what software to use, how to get my work to display on two screens, and what would be shown on each screen (would it be mirrored, two different things, or two related things?). I was getting a bit frustrated trying to come up with ideas for the visuals, so I decided to just start following tutorials and making things, hoping that would lead me somewhere. I'd never tried this approach before, but since TouchDesigner was fairly new software for me, I thought experimenting would work better than having a clear, strict vision in mind.

While trying to find a way to do hand tracking in TouchDesigner, I came across Google's MediaPipe framework. It seemed pretty powerful in the tutorials I watched that used it in TouchDesigner, and I later realised there was a web version of MediaPipe that I could use with JavaScript as well! It basically contains ready-made models for things like detecting faces, hands, poses and objects. SO COOL! Andreas called it "ml5 on steroids" haha.
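For context, MediaPipe's hand model describes each detected hand as 21 landmarks, with x and y normalized to the camera image's width and height (0.0 to 1.0) and z as a relative depth with the wrist as the origin. The sketch below is plain Python and does not call MediaPipe itself: the `Landmark` class and the `to_pixels` and `fingertips` helpers are hypothetical, written only to show how those normalized points might be mapped onto one of the screens. The index numbers follow MediaPipe's hand landmark layout (0 is the wrist, the fingertips are 4, 8, 12, 16 and 20).

```python
from dataclasses import dataclass

# Indices in MediaPipe's 21-point hand landmark layout.
WRIST = 0
THUMB_TIP = 4
INDEX_FINGER_TIP = 8
MIDDLE_FINGER_TIP = 12
RING_FINGER_TIP = 16
PINKY_TIP = 20
NUM_LANDMARKS = 21


@dataclass
class Landmark:
    """Hypothetical stand-in for one landmark returned by the hand model."""
    x: float  # 0.0 (left edge) to 1.0 (right edge) of the camera image
    y: float  # 0.0 (top edge) to 1.0 (bottom edge) of the camera image
    z: float  # relative depth, with the wrist as the origin


def to_pixels(lm: Landmark, width: int, height: int) -> tuple[int, int]:
    """Scale a normalized landmark to pixel coordinates on a width x height screen."""
    return round(lm.x * width), round(lm.y * height)


def fingertips(hand: list[Landmark]) -> dict[str, Landmark]:
    """Pick out the five fingertip landmarks from a full 21-point hand."""
    if len(hand) != NUM_LANDMARKS:
        raise ValueError(f"expected {NUM_LANDMARKS} landmarks, got {len(hand)}")
    tips = {
        "thumb": THUMB_TIP,
        "index": INDEX_FINGER_TIP,
        "middle": MIDDLE_FINGER_TIP,
        "ring": RING_FINGER_TIP,
        "pinky": PINKY_TIP,
    }
    return {name: hand[i] for name, i in tips.items()}


if __name__ == "__main__":
    # Fake hand: every landmark at the centre, except the index fingertip.
    hand = [Landmark(0.5, 0.5, 0.0) for _ in range(NUM_LANDMARKS)]
    hand[INDEX_FINGER_TIP] = Landmark(0.25, 0.75, 0.0)
    print(to_pixels(fingertips(hand)["index"], 1920, 1080))  # (480, 810)
```

Because the coordinates are normalized, the same hand data can be scaled to either screen just by changing `width` and `height`.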

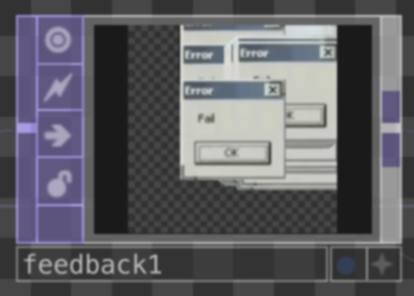

Like I said, I was pretty new to TouchDesigner; the only time I'd used it before was for a project during my internship, which was also a lot of on-the-spot trial and learning. I followed this tutorial and this tutorial and combined them to build a simple instancing network using MediaPipe's hand detection model. I was also introduced to the Feedback CHOP, which was pretty cool: it feeds a result from the previous frame back into the network, so it's roughly the equivalent of a loop in code that keeps updating the same variable.
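To make the "loop" comparison concrete, here is a plain-Python model of what an instancing network with feedback might do each frame. It is not TouchDesigner's API and not the network from the tutorials: `Instance`, `feedback_step`, `run_frames` and the `decay` blend are hypothetical names, and the blend is just one simple way to use a fed-back value. The `for` loop stands in for TouchDesigner cooking the network once per frame, and `state` plays the role of the values handed back from the previous frame; each landmark gets its own instance of the geometry.

```python
from dataclasses import dataclass

Point = tuple[float, float]


@dataclass
class Instance:
    """One copy of the instanced geometry, placed at (tx, ty)."""
    tx: float
    ty: float


def feedback_step(previous: list[Point], current: list[Point], decay: float) -> list[Point]:
    """Blend this frame's points with the points fed back from the previous frame.

    decay = 0 ignores the past entirely; values near 1 make instances trail slowly.
    """
    return [
        (decay * px + (1.0 - decay) * cx, decay * py + (1.0 - decay) * cy)
        for (px, py), (cx, cy) in zip(previous, current)
    ]


def run_frames(frames: list[list[Point]], decay: float = 0.8) -> list[Instance]:
    """Simulate the per-frame loop, producing one instance per tracked point."""
    state: list[Point] = []
    for points in frames:  # one iteration = one frame being cooked
        if not state:
            state = list(points)  # first frame: nothing to feed back yet
        else:
            state = feedback_step(state, points, decay)
    return [Instance(x, y) for x, y in state]


if __name__ == "__main__":
    # A single point jumps from (0, 0) to (1, 1) and stays there.
    frames = [[(0.0, 0.0)], [(1.0, 1.0)], [(1.0, 1.0)]]
    print(run_frames(frames, decay=0.5))  # [Instance(tx=0.75, ty=0.75)]
```

With `decay=0.5` the point only moves to 0.5 and then 0.75 instead of jumping straight to 1, which is why feedback is often used for trails and smoothing.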