Multimodality

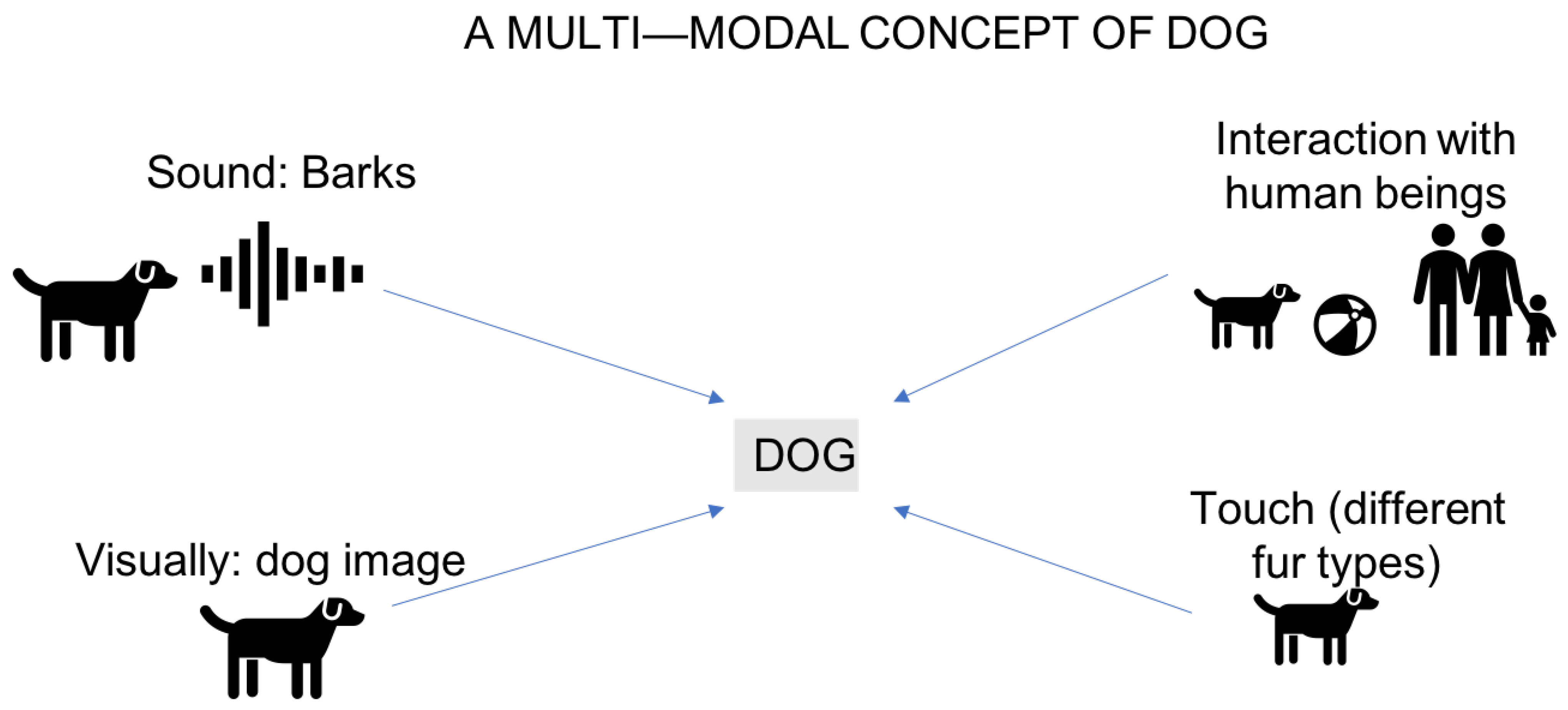

Another intriguing concept from Nathaniel Stern's The Implicit Body as Performance is multimodality in interactive experiences. Multimodality refers to the use of multiple modes of communication (such as speech, gesture, touch, and visuals) in a single interaction. It emphasizes how these modes work together to create richer, more immersive experiences. By combining different forms of input and output, multimodal systems aim to mimic the natural ways humans interact, making them more intuitive and engaging.

To further explore this concept, I’ve been reading works like Bernsen’s "Multimodality Theory," Bongers and Van Der Veer’s "Towards a Multimodal Interaction Space," Gibbon’s "Gesture Theory is Linguistics," and Jaimes and Sebe’s "Multimodal Human-Computer Interaction: A Survey." These texts are helping me deepen my understanding of how multimodality applies to interactive design and human-computer collaboration.

Echoes for Tomorrow

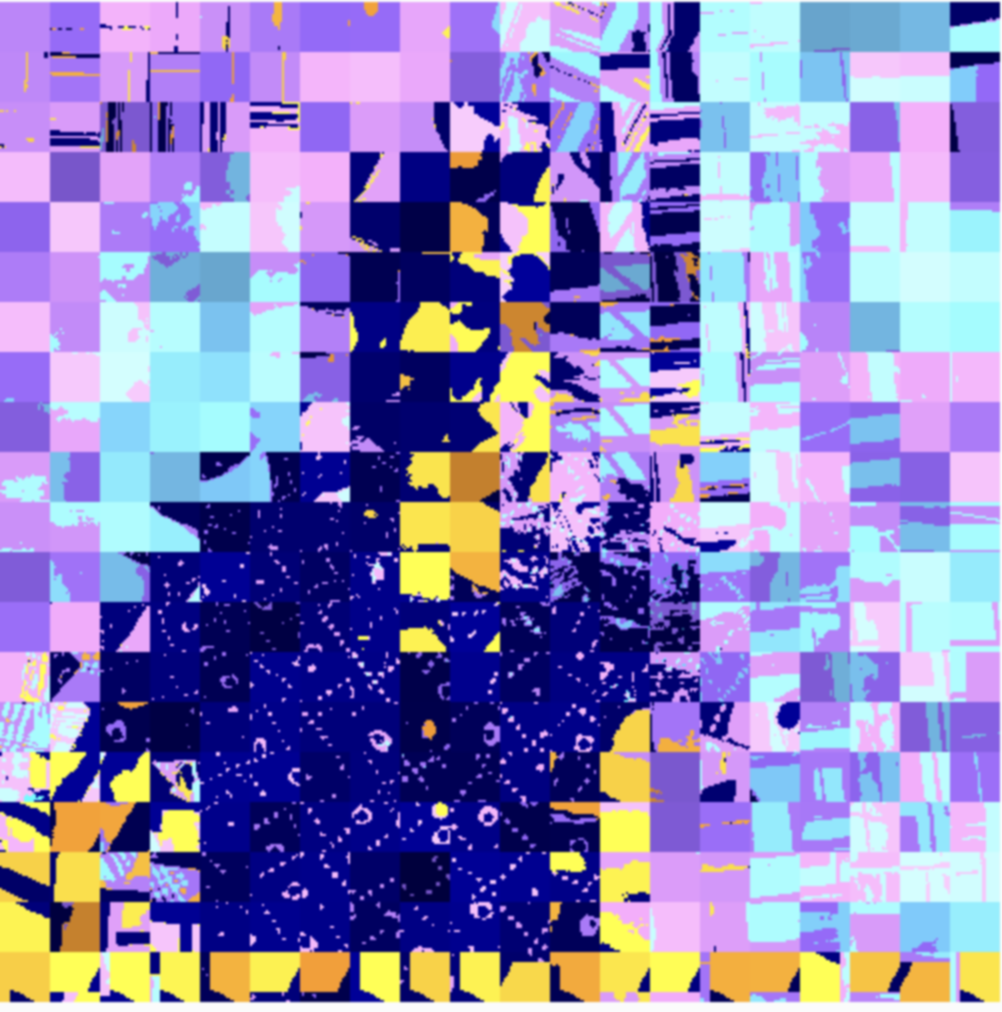

With the exhibition opening just a week away and TouchDesigner off the table, I shifted gears to create two new sketches for the screens using p5.js. Thankfully, I already had a clear vision of the visuals I wanted, so I dove right in. For the first screen, the goal was to recreate the visual style of the exhibition poster that Andreas had designed. Unfortunately, he couldn’t find the original sketch he used, so I had to improvise and replicate it myself. I decided to divide the webcam feed into grid cells, tweak their saturation and color levels, and randomly rotate a few cells by 90, 180, or 270 degrees. The result was a dynamic, print-like effect that reminded me of screen prints or risographs.
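For anyone curious how the grid idea can be put together, here is a minimal p5.js sketch of it, not the exhibition code. The cell size, rotation chance, and tint values are assumptions picked for illustration, and `buildGrid` is a hypothetical helper name. The tint is only a crude stand-in for the saturation and color tweaks described above.

```javascript
// Assumed values for illustration; the exhibition sketch used its own.
const CELL = 40;            // square cell size in pixels
const ROTATE_CHANCE = 0.15; // share of cells that get rotated

// Build a list of square cells covering a w x h frame.
// Each cell stores its top-left corner and 0..3 quarter turns.
function buildGrid(w, h, cell, rotateChance, rng = Math.random) {
  const cells = [];
  for (let y = 0; y + cell <= h; y += cell) {
    for (let x = 0; x + cell <= w; x += cell) {
      const rotated = rng() < rotateChance;
      // 1, 2 or 3 quarter turns = 90, 180 or 270 degrees
      const quarterTurns = rotated ? 1 + Math.floor(rng() * 3) : 0;
      cells.push({ x, y, quarterTurns });
    }
  }
  return cells;
}

// p5.js wiring: these functions are only called when p5 is loaded in a browser.
let video;
let grid = [];

function setup() {
  createCanvas(640, 480);
  video = createCapture(VIDEO);
  video.size(width, height);
  video.hide();
  imageMode(CENTER);
  grid = buildGrid(width, height, CELL, ROTATE_CHANCE);
}

function draw() {
  background(255);
  tint(255, 120, 90); // rough color shift, standing in for the saturation tweak
  for (const c of grid) {
    push();
    // Rotate each cell around its own center.
    translate(c.x + CELL / 2, c.y + CELL / 2);
    rotate(c.quarterTurns * HALF_PI);
    // Copy the matching region of the webcam frame into the cell.
    image(video, 0, 0, CELL, CELL, c.x, c.y, CELL, CELL);
    pop();
  }
}
```

Keeping the cells square is what lets a 90 or 270 degree turn still fill its slot. Calling `buildGrid` again every few seconds would reshuffle which cells are rotated.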

To take it a step further, I integrated ml5.js’s handPose() function to detect hands in the live webcam feed. Around the detected hands, the grid cells would turn a yellowish tint, creating a subtle but striking effect. When I showed it to Andreas, he suggested making the yellow areas solid and overlaying some text to make the difference more noticeable. After a bit of tweaking—and some struggles with text alignment—I managed to get the design working smoothly. The combination of the reactive visuals and the text added a nice layer of interactivity to the screen.
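The hand-reactive part can be sketched roughly like this. It assumes the ml5.js v1 API, where `ml5.handPose()` is created in `preload()`, `detectStart()` streams results, and each detected hand carries a `keypoints` array of `{x, y}` points. The helper `cellsNearHands`, the padding, the tile size, and the placeholder label text are all hypothetical choices for illustration.

```javascript
// Return the "col,row" keys of grid cells that fall inside each hand's
// bounding box, grown by `pad` pixels on every side.
function cellsNearHands(hands, cell, cols, rows, pad = 20) {
  const hit = new Set();
  for (const hand of hands) {
    const xs = hand.keypoints.map((k) => k.x);
    const ys = hand.keypoints.map((k) => k.y);
    const c0 = Math.max(0, Math.floor((Math.min(...xs) - pad) / cell));
    const c1 = Math.min(cols - 1, Math.floor((Math.max(...xs) + pad) / cell));
    const r0 = Math.max(0, Math.floor((Math.min(...ys) - pad) / cell));
    const r1 = Math.min(rows - 1, Math.floor((Math.max(...ys) + pad) / cell));
    for (let r = r0; r <= r1; r++) {
      for (let c = c0; c <= c1; c++) hit.add(`${c},${r}`);
    }
  }
  return hit;
}

// p5.js + ml5.js wiring (assumed v1 API); runs only in a browser with both loaded.
const TILE = 40;            // assumed cell size
const LABEL = "ECHOES ";    // placeholder text, not the exhibition copy
let handPose;
let cam;
let hands = [];

function preload() {
  handPose = ml5.handPose();
}

function setup() {
  createCanvas(640, 480);
  cam = createCapture(VIDEO);
  cam.size(640, 480);
  cam.hide();
  textAlign(CENTER, CENTER);
  textSize(TILE * 0.6);
  noStroke();
  // Keep the latest detection results around for draw().
  handPose.detectStart(cam, (results) => { hands = results; });
}

function draw() {
  image(cam, 0, 0, width, height);
  const cols = Math.floor(width / TILE);
  const rows = Math.floor(height / TILE);
  let i = 0;
  for (const key of cellsNearHands(hands, TILE, cols, rows)) {
    const [c, r] = key.split(",").map(Number);
    fill(255, 220, 0); // solid yellow cell
    rect(c * TILE, r * TILE, TILE, TILE);
    fill(0);           // one character of the label per cell
    text(LABEL[i++ % LABEL.length], c * TILE + TILE / 2, r * TILE + TILE / 2);
  }
}
```

Working with whole cells rather than pixel outlines keeps the yellow areas aligned with the grid, which also makes centering a character in each cell fairly painless.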

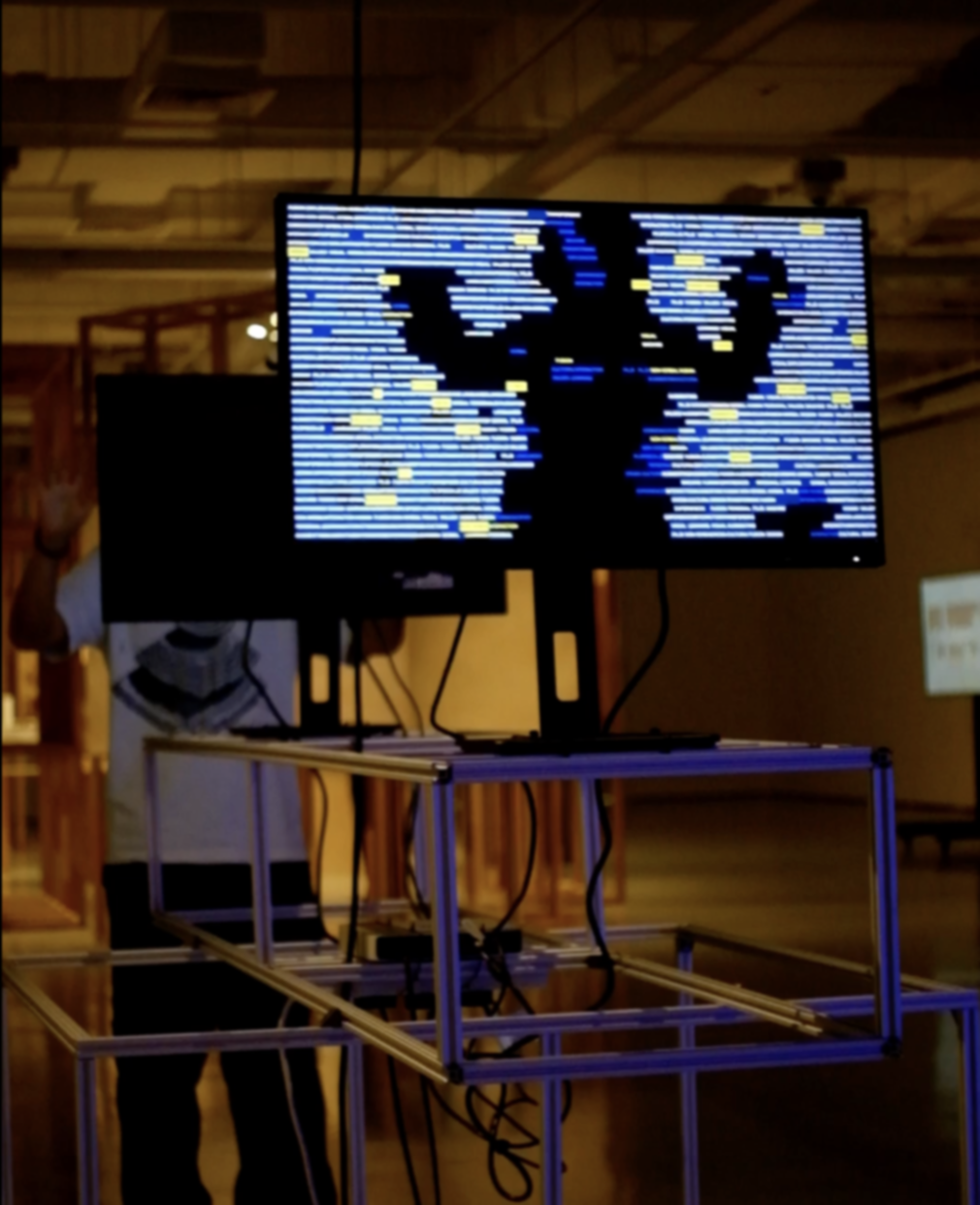

For the second screen, we opted for a more text-based outcome but wanted it to still feel connected to the first screen. I decided to use the same text for both, which would be pulled from the project descriptions for the exhibition. Inspired by Ksawery Komputery’s work, I used ml5’s body segmentation function to create a shadow-like effect where the changing text would fill the screen.
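A rough sketch of the text-silhouette idea, under a few assumptions: the ml5.js v1 `bodySegmentation` API with the "SelfieSegmentation" model, a result whose `mask` is a p5.Image, and a mask whose alpha channel separates person from background. Which side ends up opaque depends on the `maskType` option, so the `invert` flag is there to flip it. `textPositions`, the step size, and the placeholder copy are hypothetical.

```javascript
// Sample an RGBA pixel array every `step` pixels and return the positions
// whose alpha is above `threshold` (or at/below it when `invert` is true).
function textPositions(pixels, w, h, step, invert = false, threshold = 128) {
  const out = [];
  for (let y = 0; y < h; y += step) {
    for (let x = 0; x < w; x += step) {
      const alpha = pixels[4 * (y * w + x) + 3];
      const inside = alpha > threshold;
      if (inside !== invert) out.push({ x, y });
    }
  }
  return out;
}

// p5.js + ml5.js wiring (assumed v1 API); runs only in a browser with both loaded.
const STEP = 12;                      // assumed spacing between characters
const COPY = "PROJECT DESCRIPTION ";  // placeholder for the exhibition texts
let bodySegmentation;
let feed;
let segmentation;

function preload() {
  bodySegmentation = ml5.bodySegmentation("SelfieSegmentation", { maskType: "person" });
}

function setup() {
  createCanvas(640, 480);
  feed = createCapture(VIDEO);
  feed.size(640, 480);
  feed.hide();
  textSize(STEP);
  fill(255);
  // Keep the latest segmentation result around for draw().
  bodySegmentation.detectStart(feed, (result) => { segmentation = result; });
}

function draw() {
  background(0);
  if (!segmentation) return; // nothing detected yet
  const mask = segmentation.mask;
  mask.loadPixels();
  const spots = textPositions(mask.pixels, mask.width, mask.height, STEP);
  // Offsetting by frameCount makes the characters scroll through the shape.
  spots.forEach((p, i) => {
    text(COPY[(i + frameCount) % COPY.length], p.x, p.y + STEP);
  });
}
```

Passing `true` for `invert` fills everything except the body with text, so the silhouette reads as empty space instead.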

The twist? When someone stands in front of the other screen, their body contour appears as negative space on this one, creating a dynamic interplay between the visuals and the audience. This approach tied both screens together conceptually while also offering unique interactions for the viewers. Despite the challenges of last-minute adjustments, I’m excited about how the sketches are shaping up and can’t wait to see them in action during the exhibition.